All published articles of this journal are available on ScienceDirect.

Evaluating Deep Learning AI for Periapical Lesion Detection Across Panoramic, Periapical, and CBCT Radiographs: A Systematic Review

Abstract

Introduction

Early detection of periapical lesions is critical for timely clinical intervention. Artificial Intelligence (AI) technology, particularly deep learning models, offers a promising approach for identifying such lesions. This study systematically reviews the application of deep learning in detecting periapical lesions across three radiographic modalities: periapical radiography, panoramic radiography, and Cone-Beam Computed Tomography (CBCT).

Methods

This study employs a systematic literature review methodology, adhering to the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines to ensure methodological rigor and transparency.

Results

The results of this study demonstrate that integrating AI with periapical radiography, panoramic radiography, and CBCT enhances diagnostic accuracy and efficiency in the detection of periapical lesions.

Discussion

The integration of artificial intelligence with panoramic, periapical, and CBCT imaging modalities significantly enhances the diagnostic accuracy and operational efficiency in identifying periapical lesions.

Conclusion

This study found that AI performs better at evaluating mandibular periapical lesions than at evaluating maxillary periapical lesions. Furthermore, periapical radiography is more sensitive than panoramic radiography for detecting smaller periapical lesions, whereas CBCT provides the highest diagnostic accuracy for both periapical and odontogenic cystic lesions. The integration of AI with radiographic technologies shows significant potential to enhance diagnostic precision, optimize treatment planning, and improve patient outcomes in endodontic practice.

1. INTRODUCTION

Artificial Intelligence (AI) refers to the capability of computational systems to emulate human cognitive functions in performing specialized tasks. Within the healthcare domain, AI technologies have advanced significantly and are increasingly being implemented across medical and dental disciplines. In dentistry specifically, AI applications are frequently deployed through Deep Learning (DL) methodologies, a subset of machine learning that has demonstrated particular efficacy in dental diagnostics and image analysis [1].

DL, a specialized subset of Artificial Intelligence (AI), has demonstrated significant utility in diagnostic applications, particularly within dentomaxillofacial radiology. Current evidence indicates its substantial potential to enhance both accuracy and efficiency in detecting periapical lesions across multiple radiographic modalities. The fundamental advantage of DL lies in its capacity for autonomous feature extraction from raw data, effectively emulating human cognitive processes without requiring explicit programming. Through this mechanism, AI systems can systematically analyze datasets to perform critical functions, including image classification, enhancement, and segmentation. Notably, DL's sophisticated feature extraction capabilities serve to reduce data dimensionality, thereby transforming complex patterns into quantifiable parameters that can be effectively processed by machine learning algorithms [1].

Among various DL architectures, Convolutional Neural Networks (CNNs) represent a specialized class of artificial neural networks particularly suited for image analysis tasks. In medical imaging applications, CNNs have demonstrated exceptional performance in fundamental operations including lesion detection, tissue segmentation, and pathological classification [2]. Regarding detection capabilities, CNN algorithms precisely localize target structures in radiographic images by generating bounding boxes and assigning appropriate diagnostic labels to identified objects.
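Detections of this kind are usually scored by how much a predicted bounding box overlaps the expert annotation, expressed as Intersection over Union (IoU). The following is a minimal sketch of that calculation, not the implementation of any reviewed study; the `Box` layout, the example coordinates, and the 0.5 matching threshold are illustrative assumptions.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned bounding box in pixel coordinates (hypothetical layout)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def area(box: Box) -> float:
    """Area of a box; degenerate or inverted boxes count as zero."""
    return max(0.0, box.x_max - box.x_min) * max(0.0, box.y_max - box.y_min)


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two boxes, in the range [0, 1]."""
    intersection = Box(
        max(a.x_min, b.x_min),
        max(a.y_min, b.y_min),
        min(a.x_max, b.x_max),
        min(a.y_max, b.y_max),
    )
    intersection_area = area(intersection)
    union_area = area(a) + area(b) - intersection_area
    return intersection_area / union_area if union_area > 0 else 0.0


# Illustrative coordinates only: a predicted lesion box and an expert annotation.
predicted = Box(10, 10, 50, 50)
annotated = Box(30, 30, 70, 70)
overlap = iou(predicted, annotated)

# Assumed threshold: count the detection as correct only if IoU >= 0.5.
is_match = overlap >= 0.5
```

A stricter threshold demands closer agreement with the annotation, so the same model can report different sensitivity and precision values depending on the threshold chosen.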

Periapical lesions are pathological changes that occur in the tissues surrounding the apex of a tooth, most commonly as a consequence of pulp necrosis or bacterial infection. These lesions are frequently identified radiographically as areas of radiolucency. İçöz et al. described periapical inflammatory lesions as a local response in the bone around the root apex due to pulp necrosis [3]. Early and accurate detection of such lesions is essential for effective treatment planning, as delayed diagnosis can lead to disease progression and adverse clinical outcomes. Bayrakdar et al. emphasized the diagnostic importance of periapical radiolucency, noting that it is a key feature of apical periodontitis [4].

Panoramic radiography offers several advantages, including comprehensive visualization of maxillofacial structures, a simplified acquisition technique, applicability for patients with trismus or limited oral opening, and a reduced radiation dose compared to CBCT. However, this modality presents notable limitations: 1) inferior spatial resolution compared with periapical radiography, 2) non-uniform magnification across the image, compromising measurement reliability, and 3) higher radiation exposure than periapical radiography.

Cone-Beam Computed Tomography (CBCT) offers significant diagnostic advantages, such as: 1) distortion-free imaging with multiplanar reconstruction capability (axial, sagittal, coronal, and 3D views), 2) minimal artifact production [5], and 3) high-resolution three-dimensional visualization of anatomical structures with lower radiation exposure than conventional CT. However, CBCT presents notable limitations when compared to 2D radiography, including increased radiation dose, higher operational costs, and prolonged interpretation time due to the substantial volume of three-dimensional anatomical data requiring comprehensive analysis. The extensive datasets generated by CBCT, while diagnostically valuable, require more meticulous evaluation compared to conventional two-dimensional imaging modalities.

While panoramic radiography, periapical radiography, and CBCT are established modalities for assessing dental structures and surrounding tissues, current evidence lacks consensus on the optimal imaging modality for Artificial Intelligence (AI)-assisted radiodiagnosis of periapical lesions. Each modality presents distinct advantages and limitations that may differentially influence machine learning model performance, necessitating further comparative investigation to determine their respective efficacies in AI applications.

As such, this systematic review aims to comprehensively evaluate and synthesize the current evidence on the application of deep learning models for periapical lesion detection across three radiographic modalities: periapical, panoramic, and CBCT. Through rigorous analysis of the available scientific literature, this study seeks to establish a standardized framework for comparing the diagnostic performance of these imaging techniques when integrated with artificial intelligence algorithms.

Accordingly, a critical understanding of each radiographic modality's comparative advantages and limitations is essential for evaluating how DL models might enhance the diagnostic sensitivity and specificity of periapical lesion detection. This systematic review synthesizes current evidence from the scientific literature to: 1) establish standardized performance benchmarks across imaging modalities, 2) identify optimal DL architectures for different radiographic techniques, and 3) delineate future research directions. The findings are anticipated to serve as a foundational reference for both dental researchers developing next-generation DL algorithms and clinicians seeking to implement AI-assisted diagnostic systems in endodontic practice.

2. MATERIALS AND METHODS

This systematic review, conducted from March to May 2024 following PRISMA guidelines, employed the PICO framework to evaluate radiographic modalities for periapical lesion detection. The population comprised studies on periapical lesions, with interventions analyzing CBCT, periapical, and panoramic radiography. Comparisons assessed relative efficacy in early detection, while outcomes determined the optimal modality for AI-assisted diagnosis using deep learning models, particularly CNNs. Electronic database searches systematically identified literature examining AI integration with these modalities to compare diagnostic performance in lesion identification.

The literature search was systematically conducted across three major databases (PubMed, Scopus, and EBSCO) using the following keyword combinations: 1) “periapical lesion” AND “CBCT”, 2) “periapical lesion” AND “periapical radiography”, and 3) “periapical lesion” AND “panoramic radiography”. This search strategy yielded an initial pool of 20 relevant articles, which were subsequently subjected to a rigorous systematic review according to the predefined protocol.

The comprehensive database search was executed in April 2024 across PubMed, Scopus, and EBSCO using predefined keyword combinations. An initial filter limited results to publications from 2019 to 2024, yielding 121 articles. After applying the inclusion criteria and removing duplicates, 72 articles remained. Following rigorous title/abstract screening, 20 studies met all eligibility criteria for final inclusion as shown in Table 1 below.

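| Database of Scientific Literature | Search Strategy | Number of Articles |
|---|---|---|
| PubMed https://pubmed.ncbi.nlm.nih.gov/ | #1 periapical lesion AND CBCT; #2 periapical lesion AND periapical; #3 periapical lesion AND panoramic | 1 |
| Scopus https://www.scopus.com/ | #1 periapical lesion AND CBCT; #2 periapical lesion AND periapical; #3 periapical lesion AND panoramic | 1 |
| EBSCO https://www.ebsco.com/products/research-databases/e-journals-database | #1 periapical lesion AND CBCT; #2 periapical lesion AND periapical; #3 periapical lesion AND panoramic | 18 |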

This systematic review protocol was registered in the International Prospective Register of Systematic Reviews (PROSPERO) under the registration number: CRD420251112376.

2.1. Inclusion and Exclusion Criteria

2.1.1. The Study Selection Adhered to the following Inclusion Criteria

(1) Original research articles evaluating AI applications, specifically deep learning models including Convolutional Neural Networks (CNNs), for periapical lesion detection across three radiographic modalities: periapical radiography, panoramic radiography, and CBCT;

(2) Studies indexed in major scientific databases (PubMed, Scopus, Web of Science Thomson Reuters, EBSCO, and Directory of Open Access Journals [DOAJ]);

(3) Publications available as full-text articles in English.

2.1.2. The Exclusion Criteria Comprised

(1) Studies utilizing AI or machine learning for periapical lesion detection with imaging modalities other than periapical radiography, panoramic radiography, or Cone-Beam Computed Tomography (CBCT);

(2) Animal studies or in vitro research; and

(3) Review articles, meta-analyses, or non-primary literature.

This study employs the PICO framework to systematically evaluate radiographic modalities for periapical lesion detection, as shown in Table 2 below.

| Component | Description |
|---|---|
| Population/Problem | Description of articles on artificial intelligence models, deep learning, and CNN |
| Intervention | CBCT, periapical radiograph, panoramic radiograph |
| Comparison | Between CBCT, periapical radiograph, and panoramic radiograph, which one is best used in a deep learning model and CNN (accuracy, validity, reliability) |
| Outcomes | As a review of the best radiographic modalities in detecting periapical lesions using AI deep learning and CNN models |

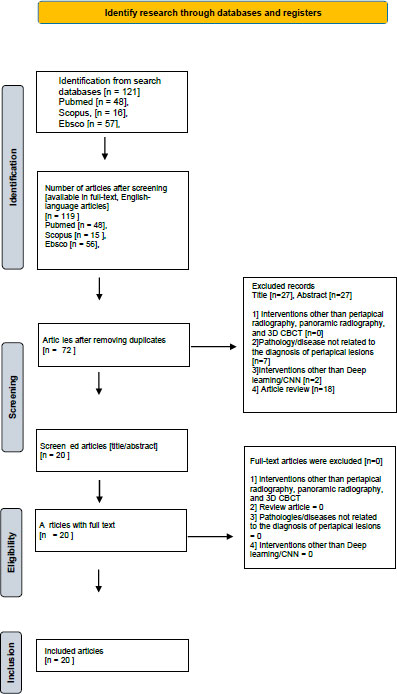

The study selection process for this systematic review follows PRISMA guidelines and is illustrated in Fig. (1).

PRISMA diagram.


Following the PRISMA protocol, our systematic search identified 119 potentially relevant publications (journal articles and proceedings). After removing 72 duplicate records and excluding 27 additional publications that failed to meet our inclusion criteria, we retained 20 high-quality articles for final analysis in this systematic review.
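The selection arithmetic reported above can be checked with a few lines of code. This is a minimal sketch that uses only the counts stated in the text; the function and key names are illustrative and are not part of the PRISMA guidelines.

```python
def prisma_flow(identified: int, duplicates: int, excluded: int) -> dict:
    """Return the record counts at each stage of a simple selection flow."""
    screened = identified - duplicates   # records left after de-duplication
    included = screened - excluded       # records left after eligibility checks
    if screened < 0 or included < 0:
        raise ValueError("more records removed than were available")
    return {
        "identified": identified,
        "after_duplicates_removed": screened,
        "included": included,
    }


# Counts as reported in the text: 119 identified, 72 duplicates, 27 excluded.
flow = prisma_flow(identified=119, duplicates=72, excluded=27)
print(flow)
```

With these inputs, 47 records remain after de-duplication and 20 are included, which agrees with the number of studies analyzed.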

The data synthesis was conducted through a systematic comparison of literature that met both quality assessment standards and inclusion/exclusion criteria. This analytical process specifically aimed to aggregate current evidence, critically evaluate methodologies, and synthesize findings regarding deep learning applications for periapical lesion detection across three radiographic modalities (periapical radiography, panoramic radiography, and CBCT). The synthesis focused on identifying patterns, methodological consistencies, and knowledge gaps in the application of these AI technologies for radiodiagnostic purposes.
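Because the included studies report different performance metrics (sensitivity, specificity, precision, F1 score), comparing them requires shared definitions. The sketch below states the standard formulas and checks two F1 scores against the precision and recall values reported in Table 4; the function names are illustrative, and the code is a reading aid rather than part of the review protocol.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Recall: proportion of true lesions that the model detects."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Proportion of lesion-free cases that the model correctly clears."""
    return tn / (tn + fp)


def precision(tp: int, fp: int) -> float:
    """Positive predictive value: proportion of detections that are real."""
    return tp / (tp + fp)


def f1_score(precision_value: float, recall_value: float) -> float:
    """Harmonic mean of precision and recall."""
    total = precision_value + recall_value
    return 2 * precision_value * recall_value / total if total > 0 else 0.0


# Precision and recall as reported in Table 4 for two panoramic studies.
f1_icoz = f1_score(0.56, 0.98)        # İçöz et al. [3]
f1_bayrakdar = f1_score(0.84, 0.92)   # Bayrakdar et al. [4]
print(round(f1_icoz, 2), round(f1_bayrakdar, 2))  # prints 0.71 0.88
```

Both results match the F-measure and F1 score given by the respective studies, which suggests that those entries are internally consistent.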

2.2. Data Extraction

The data extraction process generated a comprehensive synthesis table containing the following key elements: 1) author name(s), 2) publication year, 3) study title, 4) sample characteristics, 5) research methodology, and 6) principal findings. This structured format enables systematic comparison of studies while maintaining methodological transparency and facilitating analysis of evidence patterns across the literature.
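One way to keep such a synthesis table consistent is to store each study as a structured record with the six elements listed above. The following is a hedged sketch: the `ExtractionRecord` class and its field names are assumptions made for illustration, and the example values are taken from the entry for İçöz et al. [3] in Tables 3 and 4.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ExtractionRecord:
    """One row of the synthesis table (hypothetical field names)."""
    authors: str
    year: int
    title: str
    sample: str
    methodology: str
    findings: str


record = ExtractionRecord(
    authors="İçöz et al.",
    year=2023,
    title=("Evaluation of an artificial intelligence system for the "
           "diagnosis of apical periodontitis on digital panoramic images"),
    sample="306 panoramic radiographs with 400 roots diagnosed with AP",
    methodology="Cross-sectional; CNN-based YOLO model",
    findings="Recall 0.98 for the lower jaw; precision 0.56; F-measure 0.71",
)

# Convert to a plain dict, e.g. for writing a row of the synthesis table.
row = asdict(record)
```

Using a fixed set of fields makes missing information visible at extraction time instead of at the comparison stage.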

3. RESULTS

The final analysis included 20 cross-sectional studies conducted across seven countries (Turkey, the United Kingdom, the United States, South Korea, the Netherlands, Germany, and Switzerland). All selected publications were Scopus-indexed journals (Q1-Q3 tiers), published between 2019 and 2024 in English. These studies are systematically presented in Table 3 [3-23], which provides a comprehensive overview of their key characteristics and findings.

| Author (year)/Refs. | Title | Research Design | Journal | Country |
|---|---|---|---|---|
| İçöz et al. (2023) [3] | Evaluation of an artificial intelligence system for the diagnosis of apical periodontitis on digital panoramic images | Cross-sectional | Nigerian Journal of Clinical Practice | Turkey |
| Bayrakdar et al. (2022) [4] | A U-net approach to apical lesion segmentation on panoramic radiographs | Cross-sectional | Biomed Research International | Turkey |
| Çelik et al. (2023) [6] | The Role of Deep Learning for Periapical lesion Detection on panoramic radiographs | Cross-sectional | Dentomaxillofacial Radiology | United Kingdom |
| Song et al. (2022) [7] | Deep Learning-based apical lesion segmentation from panoramic radiographs | Cross-sectional | Biomed Research International | Turkey |
| Orhan et al. (2023) [8] | Determining the reliability of diagnosis and treatment using artificial Intelligence software with panoramic radiographs. | Cross-sectional | Imaging Science in Dentistry | South Korea |
| Ver Berne et al. (2023) [9] | A deep learning approach for radiological detection and classification of radicular cysts and periapical granulomas | Cross-sectional | Journal of Dentistry | Netherlands |
| Moidu et al. (2022) [10] | Deep learning for categorization of endodontic lesions based on the radiographic periapical index scoring system. | Cross-sectional | Clinical Oral Investigation | Germany |
| Li et al. (2021) [11] | Detection of dental apical lesions using CNNs on periapical radiographs. | Cross-sectional | MDPI (Multidisciplinary Digital Publishing Institute) Sensors | Switzerland |
| Chen et al. (2021) [12] | Dental disease detection on periapical radiographs based on deep convolutional neural networks. | Cross-sectional | International Journal of Computer-Assisted Radiology and Surgery | Germany |
| Pauwels et al. (2021) [13] | Artificial intelligence for detection of periapical lesions on intraoral radiographs: Comparison between convolutional neural networks and human observers. | Cross-sectional | Oral and maxillofacial radiology | South Korea |
| Ari et al. (2022) [14] | Automatic feature segmentation in dental periapical radiographs | Cross-sectional | MDPI (Multidisciplinary Digital Publishing Institute) Sensors | Switzerland |
| Lee et al. (2019) [15] | Diagnosis of cystic lesions using panoramic and cone beam computed tomographic images based on a deep learning neural network | Cross-sectional | Oral Diseases | United Kingdom |
| Huang et al. (2024) [16] | Uncertainty-based active learning by Bayesian U-Net for multi-label cone-beam CT segmentation. | Cross-sectional | Basic Research Technology | Philadelphia |
| Fu et al. (2023) [17] | Clinically oriented CBCT periapical lesion evaluation via 3D CNN algorithm. | Cross-sectional | Journal of Dental Research | United States |
| Hadzic (2024) [18] | Evaluating a periapical lesion detection CNN on a clinically representative CBCT dataset - A validation study. | Cross-sectional | MDPI (Multidisciplinary Digital Publishing Institute) Sensors | Switzerland |
| Setzer et al. (2020) [19] | Artificial intelligence for the computer-aided detection of periapical lesions in cone-beam computed tomographic images. | Cross-sectional | Journal of Endodontics | United States |
| Ezhov et al. (2021) [20] | Clinically applicable artificial intelligence system for dental diagnosis with CBCT. | Cross-sectional | Scientific reports | United Kingdom |
| Calazans et al. (2022) [21] | The effect of a deep-learning tool on dentists' performances in detecting apical radiolucencies on periapical radiographs | Cross-sectional | Dentomaxillofacial Radiology | United Kingdom |
| Hamdan et al. (2022) [22] | The effect of a deep-learning tool on dentists' performances in detecting apical radiolucencies on periapical radiographs | Cross-sectional | Hindawi Journal of Healthcare Engineering | United Kingdom |
| Wang et al. (2022) [23] | Deep learning-based image segmentation of cone-beam computed tomography images for oral lesion detection | Cross-sectional | Imaging Science in Dentistry | South Korea |

The systematic review identified 20 eligible articles, which are comprehensively summarized in Table 4. The analysis revealed that 17 studies [1-10, 12, 14-15, 17-20] employed Convolutional Neural Networks (CNNs), while the remaining 3 studies [11, 13, 16] utilized U-Net architectures for periapical lesion detection.

| No. | Numeric Citations | Authors (Year) | Algorithm Architecture | Objective | Number of Samples | Performance of AI | Conclusion |
|---|---|---|---|---|---|---|---|
| 1. | [3] | İçöz et al. “Evaluation of an artificial intelligence system for the diagnosis of apical periodontitis on digital panoramic images” Year published: 2023 | Deep learning model based on a Convolutional Neural Network (CNN); algorithm: YOLO (You Only Look Once); architecture: YOLO-CAD (Computer-Aided Diagnosis) | To assess the diagnostic performance of an Artificial Intelligence (AI) system in identifying tooth roots affected by apical periodontitis (AP) using digital panoramic radiographs. To compare the detection accuracy of the AI system in identifying apical periodontitis between roots located in the maxillary and mandibular regions. | 306 panoramic radiographs with 400 roots diagnosed with apical periodontitis (AP) | The AI system has a recall of 0.98 for the lower jaw, but the precision (0.56) and F-measure (0.71) still need improvement, especially for AP detection in the upper jaw. | The YOLO-based CAD system demonstrated good Positive Predictive Value (PPV) and sensitivity for detecting mandibular apical periodontitis (AP), particularly in cases with easily identifiable lesions. However, its performance was suboptimal for maxillary AP, likely due to the complex anatomy of the maxilla and its close proximity to the maxillary sinus, which can obscure lesion boundaries. While some deep learning models have shown clinically useful diagnostic capabilities, no AI system to date fully replicates human cognitive reasoning. Nevertheless, such systems may help reduce the diagnostic burden on dentists by assisting with lesion detection. |
| 2. | [4] | Bayrakdar et al. “A U-Net Approach to Apical Lesion Segmentation on Panoramic Radiographs” Year published: 2022 | Deep Convolutional Neural Network (D-CNN) model based on the U-Net algorithm | To evaluate the performance of a deep convolutional neural network (D-CNN)-based artificial intelligence algorithm in segmenting apical lesions on dental panoramic radiographs. | A total of 470 anonymized panoramic radiographs were used | Sensitivity 0.92, precision 0.84, and F1 score 0.88 at an IoU value of 70%. The artificial intelligence (AI) model successfully segmented 63 apical lesions on 47 radiographs in the test dataset. Twelve apical lesions were not detected, whereas in 5 cases without apical lesions, the AI model still segmented lesions. | Deep learning AI models facilitate the evaluation of periapical pathology using panoramic radiographs. Incorporating AI for the detection and segmentation of apical lesions has the potential to reduce clinicians' diagnostic workload, streamline workflow, and enhance early identification of pathologies. |
| 3. | [6] | Çelik et al. “The role of deep learning for periapical lesion detection on panoramic radiographs” Year published: 2023 | CNN-based deep learning model. Ten deep learning models were used: Faster R-CNN, RetinaNet, YOLOv3, SSD, Libra R-CNN, Dynamic R-CNN, Cascade R-CNN, FoveaBox, SABL, and ATSS | To investigate the capability of deep learning algorithms in the automatic detection of periapical lesions on panoramic radiographic images. To compare the diagnostic performance of ten distinct deep learning models in identifying periapical lesions on panoramic radiographs, with the aim of determining the most accurate and reliable architecture for clinical application. | A total of 357 anonymized panoramic radiographs were used for automatic periapical lesion detection analysis | Deep learning was successful in detecting periapical lesions on panoramic radiographs, with precision values of 0.832 - 0.953, accuracy between 0.673 and 0.812 for all models, and F1 scores of 0.8 - 0.895. The RetinaNet model provided the best detection performance with an mAP of 0.91. In addition, other models, such as Adaptive Training Sample Selection (ATSS), achieved the highest F1 score of 0.895. | This study employed ten distinct deep learning models to detect periapical lesions on panoramic radiographs. Their performance was compared against previous studies, expert assessments, and external datasets. The findings support that deep learning models can reliably and effectively identify periapical lesions, demonstrating their potential as valuable diagnostic tools in clinical practice. |
| 4. | [7] | Song et al. “Deep learning-based apical lesion segmentation from panoramic radiographs” Year published: 2022 | Deep CNN model based on the U-Net algorithm | To assess the segmentation performance and diagnostic accuracy of a pretrained U-Net architecture in identifying apical lesions on panoramic radiographic images, with a focus on its clinical applicability and robustness across varied lesion presentations. | A total of 1,000 panoramic radiographs showing apical lesions were used and divided into training (n=800, 80%), validation (n=100, 10%), and test (n=100, 10%) datasets. | At an IoU threshold of 0.3, 147 of the 180 apical lesions in the test group were segmented on panoramic radiographs. With IoU thresholds of 0.3, 0.4, and 0.5, the corresponding F1 scores were 0.828, 0.815, and 0.742. | This study highlights the potential of a guided deep learning approach for apical lesion segmentation. Among the evaluated models, the deep CNN based on the U-Net architecture demonstrated notably high performance in detecting apical lesions, reinforcing its applicability in dental diagnostic workflows. |
| 5. | [8] | Orhan et al. “Determining the reliability of diagnosis and treatment using artificial intelligence software with panoramic radiographs.” Year published: 2023 | Deep CNN model based on the U-Net algorithm (U-Net-like and Mask R-CNN) | To evaluate the accuracy of an artificial intelligence (AI) program in identifying dental conditions using panoramic radiographs. To assess the effectiveness of the AI system in radiographic interpretation. To determine the clinical appropriateness of the AI-generated treatment recommendations. | Panoramic radiographs of 100 patients (representing 4497 teeth) with known clinical examination findings were randomly selected from a university database. | When compared to ground truth, the AI demonstrated nearly flawless agreement (exceeding 0.81) in the majority of evaluations. Sensitivity was low for evaluating caries, periapical lesions, pontic cavities in root canals, and overhanging restorations, but it was very high (above 0.8) for evaluating healthy teeth, artificial crowns, dental calculus, missing teeth, fillings, lack of interproximal contact, periodontitis, and implants. | The synthesized findings suggest that AI-based decision support systems can serve as valuable adjunct tools for detecting dental conditions when applied to panoramic radiographs. Their integration into clinical workflows may enhance diagnostic accuracy and efficiency in dental practice. |
| 6. | [9] | Ver Berne et al. “A deep learning approach for radiological detection and classification of radicular cysts and periapical granulomas” Year published: 2023 | CNN-based deep learning model | To address the diagnostic challenge faced by dentists and oral surgeons in differentiating radicular cysts from periapical granulomas on panoramic radiographs. To highlight the clinical significance of accurate differentiation, given that radicular cysts typically require surgical intervention, whereas periapical granulomas are generally managed with root canal treatment. To evaluate the potential of automated diagnostic tools in supporting clinical decision-making for endodontic pathology. | Panoramic images of 80 radicular cysts and 72 periapical granulomas located in the mandible were used. In addition, 197 normal images and 58 images with other radiolucent lesions were selected to improve the model's robustness. | For radicular cysts: sensitivity 1.00 (95% CI 0.63-1.00), specificity 0.95 (95% CI 0.86-0.99), AUC 0.97. For periapical granulomas: sensitivity 0.77 (95% CI 0.46-0.95), specificity 1.00 (95% CI 0.93-1.00), AUC 0.88. The average precision for network localization was 0.83 for radicular cysts and 0.74 for periapical granulomas. | This study presents a workflow for the automatic differentiation and localization of radicular cysts and periapical granulomas on panoramic radiographs, demonstrating reliable clinical performance. Notably, it is the first study to replace pure histologic examination as the reference standard, marking a significant shift in diagnostic methodology. The application of deep learning models in this context has the potential to enhance diagnostic efficacy for apical lesions, contributing to more efficient referral strategies and improved treatment outcomes. |
| 7. | [10] | Moidu et al. “Deep learning for categorization of endodontic lesions based on radiographic periapical index scoring system.” Year published: 2022 | CNN with the YOLO (You Only Look Once) version 3 architecture | To develop a CNN model capable of accurately predicting endodontic lesions on periapical radiographs based on Periapical Index (PAI) scores, with the aim of supporting diagnostic accuracy and treatment planning in endodontics. To compare the predictive performance of the developed CNN model against PAI scores assigned by three experienced endodontists, evaluating the model's diagnostic agreement with expert assessment. | Digital intraoral periapical radiographs (IOPAR): 2200 in total. Periapical Root Areas (PRA): 3540 in total. | Model accuracy: 86.3%; sensitivity/recall: 92.1%; specificity: 76%; positive predictive value/precision: 86.4%; negative predictive value: 86.1%; F1 score: 0.89; Matthews correlation coefficient: 0.71 | The use of CNN models for classifying endodontic lesions based on radiographic PAI scores shows significant potential to support clinical diagnosis and treatment planning. Although limitations such as a relatively small training dataset and occasional misclassification of specific PAI scores were observed, the model still achieved high accuracy, indicating its promise for clinical application. |
| 8. | [11] | Li et al. “Detection of Dental Apical Lesions Using CNNs on Periapical Radiograph.” Year published: 2021 | Convolutional Neural Network (CNN) architecture | To develop an effective and efficient diagnostic approach for dental apical lesions through the application of CNNs on periapical radiographic images, with the goal of enhancing the speed and accuracy of clinical decision-making. To compare various deep learning methodologies, particularly transfer learning techniques utilizing CNN architectures, for the detection of dental apical lesions on periapical radiographs. | 460 periapical radiographs | Accuracy 92.75%, sensitivity 94.87%, specificity 90%, precision 92.5%, recall 94.87% | This study proposed two hypotheses to enhance detection success, emphasizing the role of image preprocessing (specifically, the use of a Gaussian filter) to improve image quality and cropping precision. The first hypothesis, focused on enhancing imaging data to better highlight periapical lesion features, proved particularly effective. The findings demonstrated that periapical lesions can be automatically detected and graded with a success rate of 92.75%. This advancement suggests a shift from manual to automated identification of apical lesions on periapical radiographs, marking a significant step forward in diagnostic efficiency. |
| 9. | [12] | Chen et al. “Dental disease detection on periapical radiographs based on deep convolutional neural networks.” Year published: 2021 | CNNs with the area evaluation technique to detect disease in dental periapical radiographs | To train CNNs for the detection of lesions on periapical radiographs, and to evaluate their diagnostic performance across various disease categories, lesion severities, and model training strategies. | 2900 periapical radiographs | To compare diagnostic indices such as area under the ROC curve (AUC), sensitivity, specificity, and confusion matrix between models trained using panoramic and CBCT images, and enhance OCL detection and diagnosis performance based on transfer learning. Comparing the identification and diagnosis of the three primary forms of odontogenic cystic lesions (OCLs: odontogenic keratocysts, dentigerous cysts, and periapical cysts) using panoramic and Cone-Beam Computed Tomography (CBCT) images. Using the DICE index to compare the lesion detection, sensitivity, precision, and voxel matching accuracy of two active learning algorithms (BALD and ME) with a non-AL technique (Bayesian U-Net) | Deep CNNs can effectively identify pathologies on clinical dental periapical radiographs. This study indicates that CNN performance improves when tailored training techniques are applied for specific disease types. Additionally, the models tend to more accurately detect lesions with higher severity levels, highlighting their potential utility in prioritizing cases requiring urgent attention. |
|

10. |

[13] |

Ruben Pauwels, MS, PhD, a, b Danieli Moura Brasil, DDS, MS, PhD,c Mayra Cristina Yamasaki, DDS, MS, PhD,c Reinhilde Jacobs, DDS, MS, PhD,b,d Hilde Bosmans, MS, PhD,b Deborah Queiroz Freitas, DDS, MS, PhD,c and Francisco Haiter-Neto, DDS, MS, PhD “Artificial intelligence for detection of periapical lesions on intraoral radiographs: Comparison between convolutional neural networks and human observers.” Year published: 2021 |

CNN architecture for periapical lesion detection on intraoral radiographs |

To explore the potential of artificial intelligence in enhancing the detection of periapical lesions on intraoral radiographs, and to compare the diagnostic performance of trained Convolutional Neural Network (CNN) models with that of human observers. |

Periapical radiographs of 10 prepared sockets in bovine ribs for simulation of periapical lesions |

1. With random sampling, the CNN model showed perfect accuracy on the validation data. 2. When the data were split by socket, the mean sensitivity, specificity, and area under the receiver operating characteristic curve [ROC-AUC] for the CNN model were 0.79, 0.88, and 0.86; when the data were split by filter, those values were 0.87, 0.98, and 0.93. 3. For the human observers, the sensitivity, specificity, and ROC-AUC were 0.58, 0.83, and 0.75, respectively. |

CNNs demonstrated strong potential in detecting periapical lesions on intraoral radiographs, achieving reasonable accuracy on validation datasets and, in some instances, outperforming human observers after being trained on multiple socket examples. Notably, lesion simulation improved training effectiveness. However, further external validation and clinical testing are necessary before such models can be reliably implemented in routine dental practice. |

| 11. | [14] | Ari, et al. “Automatic Feature Segmentation in Dental Periapical Radiographs” Year published: 2022 |

U-Net, which is one type of deep learning model effective in medical image segmentation | To explore the potential of AI models in supporting clinical diagnosis using dental periapical radiographs. To compare the performance of deep learning architectures, including U-Net combined with DenseNet-121, in segmenting carious lesions and alveolar bone loss. To evaluate the accuracy and effectiveness of AI by comparing U-Net segmentation results with manual segmentations by dental radiologists. |

1169 Periapical radiographic images taken from adults |

Carious lesions: sensitivity 0.82, precision 0.82, F1 score 0.82. Dental crown: sensitivity 1, precision 1, F1 score 1. Dental pulp: sensitivity 0.97, precision 0.87, F1 score 0.92. Periapical lesions: sensitivity 0.92, precision 0.85, F1 score 0.88. Root canal filling: sensitivity 1, precision 0.96, F1 score 0.98 |

A CNN-based deep learning system can effectively assist dentists with feature segmentation on periapical radiographic images. With sufficient training data, the model can accurately detect and distinguish key anatomical and pathological features. As training data volume increases, diagnostic accuracy improves, enabling clinicians to allocate their time more efficiently and potentially accelerating the diagnostic process. |

| 12. | [15] | Lee et al. “Diagnosis of cystic lesions using panoramic and cone beam computed tomographic images based on a deep learning neural network” Year published: 2019 |

Deep convolutional neural network (CNN) based on the GoogLeNet Inception-v3 architecture |

To compare the identification and diagnosis of three primary odontogenic cystic lesions (odontogenic keratocysts, dentigerous cysts, and periapical cysts) using panoramic radiographs and Cone Beam Computed Tomography (CBCT) images. To compare diagnostic indices such as area under the ROC curve (AUC), sensitivity, specificity, and confusion matrix between models trained on panoramic and CBCT images. |

2,126 images, consisting of 1,140 [53.6%] panoramic images and 986 [46.4%] CBCT images |

Panoramic images: accuracy 84.7% [95% confidence interval: 76.0%-91.1%]; AUC 0.847, sensitivity 88.2%, specificity 77.0%. CBCT images: accuracy 91.4% [95% confidence interval: 84.1%-96.1%]; AUC 0.914, sensitivity 96.1%, specificity 77.1%. CBCT showed significantly higher performance than panoramic images. |

Deep CNN architectures, such as GoogLeNet Inception-v3, have shown strong performance in detecting and diagnosing odontogenic keratocysts (OKCs), dentigerous cysts, and periapical cysts using both panoramic and CBCT images. Notably, models trained on CBCT images demonstrated superior diagnostic accuracy compared to those trained on panoramic radiographs, highlighting the advantages of volumetric imaging for lesion detection and classification. |

| 13. | [16] | Huang, et al. “Uncertainty-based Active Learning by Bayesian U-Net for Multi-label Cone-beam CT Segmentation.” Year published: 2024 |

Using a multilabel Bayesian U-Net model with 4 layers in the encoder and decoder | To extend two active learning algorithms for multi-label CBCT image segmentation and comprehensively assess their lesion detection, sensitivity, precision, and voxel matching accuracy using the DICE index, comparing these results to a non-active learning method (Bayesian U-Net). | 100 CBCT slices | BALD: 84% sensitivity, 90% specificity, 87.5% precision |

Further integration of Active Learning (AL) can significantly reduce the manual labelling burden required to train AI models for biomedical image analysis in dentistry. In this study, two AL strategies-Bayesian U-Net with BALD (Bayesian Active Learning by Disagreement) and Maximum Entropy (ME)-demonstrated improved segmentation accuracy and lesion detection performance on CBCT images. These uncertainty-based methods enhance model efficiency by prioritizing the most informative samples for annotation. |

| 14. | [17] | Fu, et al. “Clinically Oriented CBCT Periapical Lesion Evaluation via 3D CNN Algorithm.” Year published: 2023 |

Using 3D CNN PAL-NET for apical lesion detection and segmentation |

To introduce and evaluate the performance of a novel 3D convolutional neural network, PAL-Net, for the automatic detection and segmentation of periapical lesions associated with apical periodontitis. |

441 CBCT |

PAL-Net showed excellent performance compared to senior dentists and helped junior dentists reduce lesion diagnosis time. Interobserver and intraobserver agreement: 0.72 and 0.78. Segmentation: DSC 0.87; average precision 0.86; F1 0.85. Diagnostic performance: sensitivity 0.99; PPV 0.8; F1 0.88; AUC increased from 0.88 to 0.95 for junior dentists and decreased from 0.93 to 0.92 for senior dentists. Robustness on three external datasets: sensitivity 0.94, 0.9, 0.88; PPV 0.92, 0.90, 0.72; AUC 0.96, 0.95, 0.93. |

PAL-Net significantly enhanced the diagnostic performance of junior dentists by reducing diagnosis time and delivering clinically relevant segmentation for Apical Periodontitis (AP) lesions. The model also demonstrated strong generalizability and robustness in detecting lesions across a range of volumes, underscoring its potential for effective integration into clinical decision-support systems. |

| 15. | [18] | Hadzic, et al. “Evaluating a Periapical Lesion Detection CNN on a Clinically Representative CBCT Dataset: A Validation Study.” Year published: 2024 |

CNN |

To evaluate the performance of periapical lesion detection algorithms using clinical CBCT datasets. To determine whether these algorithms perform at least as well as expert clinicians in detecting periapical lesions. |

195 CBCT |

The periapical lesion detection algorithm (PAL) showed a sensitivity of 86.7% and specificity of 84.3%. The non-inferiority test rejected the null of inferiority for specificity but not for sensitivity. Sensitivity was low in lesions <1 mm, but increased to 90.4% in lesions with |

A deep learning-based algorithm for periapical lesion detection demonstrated promising performance on clinical CBCT datasets. While not yet fully robust to imaging artefacts and outliers, the model shows strong potential to aid clinicians in reducing missed diagnoses-particularly for lesions greater than 1 mm-thereby supporting more accurate and timely interventions. |

| 16. | [19] | Setzer, et al. “Artificial Intelligence for the Computer-aided Detection of Periapical Lesions in Cone-beam Computed Tomographic Images.” Year published: 2020 |

Based on the use of U-Net focused on multilabel segmentation to identify 5 different labels: Periapical lesions, tooth structure, bone, restorative materials, and background. |

To evaluate the capability of Deep Learning algorithms in detecting periapical lesions on CBCT images. To identify potential areas for improving the accuracy and efficiency of these algorithms. To compare automated lesion segmentation by Deep Learning models with clinical segmentation performed by clinicians. |

20 CBCT |

Lesion detection accuracy of 93%, with a specificity of 0.88 |

The deep learning algorithm trained within a flattened CBCT environment achieved excellent accuracy in lesion detection. This study demonstrates that applying deep learning to periapical lesion detection on CBCT images can significantly enhance both detection and segmentation accuracy, reinforcing the value of CBCT preprocessing techniques in optimizing AI performance. |

| 17. | [20] | Ezhov, et al. “Clinically applicable artificial intelligence system for dental diagnosis with CBCT.” Year published: 2021 |

Uses CNN based on the latest architecture to perform specific segmentation and detection tasks. |

To develop an AI system for dental diagnosis using CBCT images. To compare the diagnostic performance of two groups of dentists analyzing 30 CBCT scans: one assisted by AI [Diagnocat] and one without AI assistance. |

1346 CBCT scans |

With AI assistance, sensitivity was 0.8537 and specificity was 0.9672; without AI, sensitivity was 0.7672 and specificity was 0.9616. Diagnostic accuracy on CBCT images was higher with the AI system than with unaided human diagnosis. |

The integration of AI software, such as Diagnocat, significantly enhanced dental diagnostic accuracy compared to assessments made by non-AI-assisted human observers. This highlights the potential of AI tools to support more precise and consistent clinical decision-making in dentistry. |

| 18. | [21] | Calazans, et al. “Automatic Classification System for Periapical Lesions in Cone-Beam Computed Tomography” Year published: 2022 |

Combining CNN and Siamese Concatenated Network with the aim of performing joint analysis of a pair of images using sagittal and coronal sections evaluated together to assess the presence or absence of periapical lesions. |

To develop an automatic classification system capable of distinguishing healthy teeth from endodontic lesions using CBCT images. To compare the system’s performance with previous studies, particularly focusing on differentiation using Siamese network architectures. |

1000 CBCT sagittal and coronal sections |

Complete dataset: DenseNet [accuracy 70%, F1 score 0.69, specificity 0.76, precision 0.75, recall 0.64]; VGG-16 [accuracy 68%, F1 score 0.68, specificity 0.72, precision 0.72, recall 0.64]. No lesion versus large lesion [> 2 mm]: DenseNet [accuracy 79%, F1 score 0.66, specificity 0.92, precision 0.80, recall 0.55]; VGG-16 [accuracy 81%, F1 score 0.66, specificity 0.91, precision 0.74, recall 0.59]. No lesion versus small lesion [0.5-1.9 mm]: DenseNet [accuracy 66.67%, F1 score 0.45, specificity 0.85, precision 0.60, recall 0.34]; VGG-16 [accuracy 66%, F1 score 0.43, specificity 0.80, precision 0.50, recall 0.38] |

This framework is notable for its innovation, as no prior machine learning-based classification system exists for dental images that considers the specific features used in this study. Detecting periapical lesions remains a complex challenge, especially given the small lesion sizes in the UFPE dataset. To address this, lesions were categorized by size to better analyze outcomes. For context, a study by Kruse et al. reported only 63% diagnostic accuracy among human specialists for treated maxillary lesions, highlighting the potential of AI-assisted methods. |

| 19. | [22] | Hamdan, et al. “The effect of a deep-learning tool on dentists' performances in detecting apical radiolucencies on periapical radiographs.” Year published: 2022 |

Deep Learning model based on Convolutional Neural Network (CNN), using Denti.AI software |

To evaluate the efficacy of deep learning models in assisting dentists with the detection of apical radiolucency on periapical radiographs. To compare the diagnostic accuracy and outcomes of intraoral radiograph interpretation with and without deep learning system assistance. |

68 intraoral periapical radiographs in which the presence or absence of apical radiolucent lesions had been confirmed by CBCT scanning |

This study reported a clinically important 8.6% improvement in lesion detection accuracy when aided by the AI system compared to unaided reading. In addition, the AI system was able to detect 92.8% of periapical lesions in another study, demonstrating the potential of deep learning algorithms in improving diagnoses. |

This study highlights the potential of the Denti.AI system in aiding dentists with the detection and localization of apical lesions on intraoral radiographs. An AFROC improvement of 8.6% and a clinically meaningful increase in AUC underscore its diagnostic value. Further research involving larger and more diverse cohorts of readers and cases is needed to strengthen the evidence of deep learning’s impact on lesion detection and reader performance. |

| 20. | [23] | Wang, et al. “Deep Learning-Based Image Segmentation of Cone-Beam Computed Tomography Images for Oral Lesion Detection” Year published: 2021 |

Fully Convolutional Neural Network [FCNN] for image segmentation of oral lesions on CBCT images | To compare the effectiveness of deep learning segmentation algorithms with traditional segmentation methods. To evaluate and compare the accuracy, segmentation time, volume, and surface area of oral lesions generated by different segmentation techniques. |

90 patients with oral lesions on CBCT scans | 1. The number of patients with each type of oral lesion did not differ significantly between the three groups [P > 0.05]. 2. The experimental group [FCNN DL] achieved 98.3% lesion segmentation accuracy, compared to 78.4% and 62.1% for the blank group [manual segmentation method] and control group [threshold-based segmentation algorithm], respectively. 3. Segmentation of lesions and lesion models was better in the experimental and control groups than in the blank group. 4. CBCT image segmentation accuracy was significantly higher in the experimental group than in the control and blank groups [P < 0.05]. 5. Segmentation time was shorter with the FCNN DL algorithm [10.2 ± 1.4 min] than with the conventional segmentation approach [16.3 ± 1.6 min]. 6. Lesion volume and surface area obtained with the FCNN DL algorithm did not differ discernibly from those of the blank group. |

The FCNN algorithm enhances the accuracy, efficiency, and overall quality of oral lesion segmentation on CBCT images, outperforming traditional methods such as manual and threshold-based segmentation. |

4. DISCUSSION

Periapical radiography remains one of the most commonly used imaging modalities in dental practice. It offers high-resolution visualization of dental structures, fast image acquisition for immediate clinical interpretation, relatively low radiation exposure, and lower cost than more advanced modalities such as CBCT. Song et al. make a comparable point about panoramic radiographs, which are commonly used to diagnose apical lesions and deliver relatively low radiation doses compared to CBCT [7]. These advantages support the continued use of periapical radiography as a frontline diagnostic tool.

However, the two-dimensional nature of periapical radiographs imposes inherent limitations. This modality does not provide volumetric anatomical detail, which can lead to missed diagnoses, particularly when anatomical structures overlap. Bayrakdar et al. pointed out that panoramic radiographs, being two-dimensional, may fail to detect certain pathologies due to anatomical superimposition [4]. Moreover, periapical radiographs are highly technique-sensitive; improper angulation or patient positioning may cause geometric distortion. İçöz et al. further noted that these techniques are limited by the nature of two-dimensional imaging for three-dimensional structures, compounded by factors such as anatomic noise, superimposition, and distortion effects [3].

Considering both the advantages and limitations, periapical radiography remains highly valuable for initial diagnostic screening, while CBCT should be reserved for complex cases that require comprehensive three-dimensional anatomical assessment.

In the analysis of periapical radiographs using a deep learning approach on a dataset of 1,169 images, Ari, et al. [2022] reported a sensitivity of 87–90% for detecting dental caries, crowns, and dental pulp; 85–92% for identifying periapical lesions; and 96–98% for detecting root canal fillings [14]. In the study by Chen, et al. [2021], improved results were observed in the detection of advanced periapical periodontitis [12]. Meanwhile, Hamdan, et al. [2022], in a study involving 68 periapical radiographs validated with Cone Beam Computed Tomography, found that sensitivity varied according to lesion size: 85% for small lesions measuring between 2 and 5 millimeters, and 92% for larger lesions measuring 5 millimeters or more [22]. Moidu, et al. [2022], using 540 periapical radiographs, reported a sensitivity of 92.1%, a specificity of 86.4%, and an F1 score of 0.86 [10]. Similarly, Pauwels, et al. [2021], in an analysis of 5,000 periapical radiographs, demonstrated the ability to detect lesions and estimate their size within 1 minute 45 seconds to 3 minutes, achieving a sensitivity between 79% and 100% and a specificity between 88% and 100% [13].
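
The sensitivity, specificity, and precision values quoted for these studies are all derived from a 2×2 comparison between model output and the reference standard. The following is a minimal sketch of those standard definitions; the class name, function names, and counts are hypothetical and are not taken from any of the reviewed studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from comparing model output with the reference standard."""
    tp: int  # lesion present and detected
    fp: int  # lesion reported but absent
    tn: int  # healthy site correctly cleared
    fn: int  # lesion present but missed


def sensitivity(c: ConfusionCounts) -> float:
    """Share of true lesions that the model detects (also called recall)."""
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    """Share of healthy sites that the model correctly clears."""
    return c.tn / (c.tn + c.fp)


def precision(c: ConfusionCounts) -> float:
    """Share of reported lesions that are real (positive predictive value)."""
    return c.tp / (c.tp + c.fp)


# Hypothetical counts, for illustration only.
example = ConfusionCounts(tp=90, fp=10, tn=80, fn=20)
print(sensitivity(example), specificity(example), precision(example))
```

Because sensitivity depends only on lesion-positive cases and specificity only on lesion-negative cases, a study can report a high value for one and a modest value for the other on the same dataset.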

For panoramic radiography, Ver Berne et al. [2023] implemented deep learning on 255 panoramic radiographs [197 normal and 58 with radiolucent lesions], achieving 95% sensitivity and specificity for detecting radicular cysts, and 77% sensitivity with 100% specificity for granulomas [9]. Lee, et al. evaluated 34 patients with odontogenic cysts and keratocysts using both panoramic and CBCT imaging, reporting CBCT sensitivity of 96.1% and specificity of 77.1%, compared with 88.2% sensitivity and 77% specificity with panoramic images [15]. Song, et al., using 180 panoramic radiographs, identified 147 lesions with an F1 score of 0.82 [7]. Bayrakdar, I. S. et al. analyzed 470 radiographs and reported 92% sensitivity, 84% precision, and an F1 score of 0.8 [4]. Orhan et al. examined 4,497 teeth with CBCT to assess various dental conditions, achieving 80% sensitivity for detecting healthy and treated teeth, though performance declined for pathological cases [8]. İçöz, et al. evaluated 306 panoramic images [400 roots] with apical periodontitis. Their AI model detected 119 mandibular and 56 maxillary lesions, with a recall of 0.98, a precision of 0.56, and an F1 score (F-measure) of 0.71 [3].
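
Several of these studies summarize performance with an F1 score, the harmonic mean of precision and recall. As a brief sketch of that standard formula (the function name is illustrative), the precision and recall reported for İçöz et al. can be combined as follows.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall as reported for İçöz et al. in the text above.
icoz_f1 = f1_score(precision=0.56, recall=0.98)
print(round(icoz_f1, 2))  # 0.71
```

The harmonic mean is pulled toward the lower of the two inputs, which is why a recall of 0.98 paired with a precision of 0.56 yields an F1 score of only 0.71.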

In a deep learning CNN-based study by Fu, et al. [2024], CBCT examinations involving 4,951 teeth [563 with apical periodontitis and 4,388 without] achieved a sensitivity of 88–94% [17]. The CNN model significantly reduced diagnostic time by 69.9 minutes for junior endodontists and 32.4 minutes for senior endodontists. Meanwhile, Wang, X. et al., using 90 CBCT scans, reported 98% accuracy in detecting apical periodontitis (38%), periapical infections (17%), and dental pulp conditions (29%) [23]. Ezhov, et al., analyzing 1,346 CBCT images, achieved 85% sensitivity and 96% specificity in identifying periapical lesions [20]. Further, Calazans, M.A. et al., using 5,343 CBCT scans, categorized 454 as normal, 276 as small lesions [0.5–1.9 mm], and 270 as large lesions [≥3 mm] [21]. Setzer, et al., evaluating 61 roots, reported 93% accuracy and 88% specificity [19]. Huang, et al. compared two active learning strategies, BALD and ME, with a non-active-learning Bayesian U-Net on 100 CBCT slices; BALD exhibited the highest sensitivity (84%), whereas ME achieved the highest specificity (96.3%) and precision (95%) [16]. Hadzic et al. [2023] reported 86.7% sensitivity and 84.3% specificity for CBCT-based detection of periapical lesions [18].
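
The two active learning strategies mentioned for Huang, et al. rank unlabeled samples by model uncertainty. The sketch below is not their implementation; it only illustrates the textbook definitions of the two scores for a single binary label, assuming that several stochastic forward passes of a Bayesian network each yield a lesion probability. All function names and probability values are hypothetical.

```python
import math
from typing import Sequence


def binary_entropy(p: float) -> float:
    """Entropy (in nats) of a Bernoulli variable with success probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1 - p) * math.log(1 - p))


def max_entropy_score(samples: Sequence[float]) -> float:
    """ME: entropy of the mean prediction over the stochastic passes."""
    mean_p = sum(samples) / len(samples)
    return binary_entropy(mean_p)


def bald_score(samples: Sequence[float]) -> float:
    """BALD: predictive entropy minus the average entropy of each pass."""
    expected = sum(binary_entropy(p) for p in samples) / len(samples)
    return max_entropy_score(samples) - expected


# Hypothetical lesion probabilities for one voxel from four stochastic passes.
passes_disagree = [0.05, 0.95, 0.10, 0.90]  # each pass confident, passes conflict
passes_unsure = [0.50, 0.50, 0.50, 0.50]    # every pass equally uncertain

for name, s in [("disagree", passes_disagree), ("unsure", passes_unsure)]:
    print(name, round(max_entropy_score(s), 3), round(bald_score(s), 3))
```

In this toy case both voxels receive the same maximum-entropy score, but only the voxel on which the passes disagree receives a high BALD score. That difference is one reason the two strategies can select different samples for annotation.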

The diagnostic capabilities of junior dentists are significantly enhanced through the integration of artificial intelligence and deep learning techniques applied to CBCT imaging, thereby markedly reducing diagnostic time. Moreover, these methods produce clinically relevant segmentation outcomes [17]. CNN algorithms in CBCT image segmentation have been shown to improve the accuracy, efficiency, and overall quality of oral lesion segmentation compared to conventional approaches such as manual and threshold-based segmentation [23]. DL applications in periapical lesion detection on CBCT images also demonstrate the potential to enhance diagnostic precision and segmentation quality [19]. In a study by Calazans et al., the combination of CNN with a Siamese Concatenated Network for classifying the presence or absence of periapical lesions in CBCT images achieved an accuracy of 70% [21]. This combined model shows promise as an effective automated classification approach and could support clinicians in achieving faster, more accurate diagnoses of periapical lesions in clinical practice.
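
Segmentation quality in the CBCT studies is commonly summarized with the Dice similarity coefficient (DSC, also written DICE index), which measures the overlap between a predicted lesion mask and a reference mask. The following is a minimal sketch of that standard formula on sets of voxel coordinates; the function name and coordinates are hypothetical.

```python
from typing import Iterable, Tuple

Voxel = Tuple[int, int, int]


def dice_coefficient(pred: Iterable[Voxel], truth: Iterable[Voxel]) -> float:
    """Dice similarity: twice the overlap divided by the total mask size."""
    pred_set, truth_set = set(pred), set(truth)
    if not pred_set and not truth_set:
        return 1.0  # assumption: two empty masks count as perfect agreement
    overlap = len(pred_set & truth_set)
    return 2 * overlap / (len(pred_set) + len(truth_set))


# Hypothetical voxel coordinates, for illustration only.
predicted = [(0, 0, 0), (0, 0, 1), (0, 1, 0), (1, 0, 0)]
reference = [(0, 0, 0), (0, 0, 1), (0, 1, 0), (0, 1, 1)]
print(dice_coefficient(predicted, reference))  # 0.75
```

A score of 1 indicates identical masks and 0 indicates no overlap, so small lesions are penalized heavily for even a few misplaced voxels.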

In sum, integrating artificial intelligence with panoramic, periapical, and CBCT imaging modalities significantly enhances diagnostic accuracy and operational efficiency in identifying periapical lesions. By applying machine learning algorithms trained on heterogeneous, comprehensive datasets, these systems can assist clinicians in detecting lesions as small as 1 millimeter and distinguishing between benign and malignant pathological conditions. This technological advancement offers several notable benefits, including expedited diagnostic processes, reduced likelihood of human diagnostic errors, and improved precision in identifying cystic lesions. Nevertheless, it is imperative to underscore that such systems are intended to function as supportive tools within clinical practice rather than as replacements for clinical judgment. Accordingly, the responsibility for diagnosis and therapeutic decision-making must reside with qualified dental professionals.

CONCLUSION

In conclusion, artificial intelligence holds the potential to transform the diagnosis of periapical lesions by augmenting the diagnostic capabilities of panoramic, periapical, and CBCT imaging modalities. While each modality offers distinct advantages and limitations, integrating AI with radiographic technologies introduces a new paradigm for diagnostic precision, treatment planning, and clinical outcomes in endodontics. Realizing this potential requires ongoing collaboration among dental practitioners, oral and maxillofacial radiologists, and artificial intelligence researchers. Notably, AI systems demonstrate superior performance in evaluating periapical lesions in the mandible compared to those in the maxilla. Additionally, periapical radiographs offer greater sensitivity in detecting smaller lesions than panoramic radiographs, whereas CBCT provides the highest diagnostic accuracy, particularly for identifying periapical and odontogenic cystic lesions, including odontogenic keratocysts, dentigerous cysts, and periapical cysts. Importantly, the application of AI contributes to the early detection of periapical lesions, including those as small as 1 millimeter, thereby reducing the likelihood of missed diagnoses and supporting more effective clinical decision-making.

Hence, artificial intelligence has the potential to revolutionize the diagnosis of periapical lesions by enhancing the diagnostic utility of panoramic, periapical, and CBCT imaging modalities. Although each radiographic modality has inherent strengths and limitations, integrating AI with these imaging techniques offers a transformative approach to clinical diagnostics. Thus far, this synergy has facilitated greater diagnostic accuracy, optimized treatment planning, and ultimately improved patient outcomes in endodontics. To fully harness AI’s capabilities in dental radiology, sustained interdisciplinary collaboration among dental clinicians, radiologists, and artificial intelligence researchers is essential. Such cooperative efforts will ensure the responsible development and implementation of AI technologies, advancing both the precision and efficacy of periapical lesion management in contemporary dental practice.

AUTHORS’ CONTRIBUTIONS

The authors confirm their contribution to the paper as follows: F.S., B.K.: Writing - Reviewing and Editing. All authors reviewed the results and approved the final version of the manuscript.

LIST OF ABBREVIATIONS

| AI | = Artificial Intelligence |

| CBCT | = Cone-Beam Computed Tomography |

| DL | = Deep Learning |

| CNN | = Convolutional Neural Networks |

| AP | = Apical Periodontitis |

| PPV | = Positive Predictive Value |

| PAI | = Periapical Index |

| AUC | = Area Under the ROC Curve |

| OCLs | = Odontogenic Cystic Lesions |

| ROC-AUC | = Area Under the Receiver Operating Characteristic Curve |

| OKCs | = Odontogenic Keratocysts |

| AL | = Active Learning |

| BALD | = Bayesian Active Learning by Disagreement |

| ME | = Maximum Entropy |

| FCNN | = Fully Convolutional Neural Network |

AVAILABILITY OF DATA AND MATERIALS

The authors confirm that the data supporting the findings of this research are available within the article.

ACKNOWLEDGEMENTS

Declared none.